I've seen firsthand the excitement around AI in Banking, Financial Services, and Insurance (BFSI). Leaders are eager to deploy AI solutions, but many initiatives stall after the pilot phase. They burn through budgets and show initial promise, but fail to deliver sustained value. The question isn't whether AI can transform BFSI; it's **why AI pilots fail to reach production in BFSI** and what we can do about it.

The problem, in short: many AI projects in BFSI never move beyond the initial testing phase to become fully operational, which results in wasted investment and unrealized potential.

AI Pilots in BFSI: Roadmap showing the steps to move from pilot to production.

TL;DR: The majority of AI pilots in BFSI fail to reach production due to a combination of factors including lack of integration with existing systems, unclear business goals, poor data quality, and insufficient operationalization. Addressing these foundational issues early on, rather than focusing solely on the AI models themselves, is crucial for successful deployment and long-term value creation.

Lack of Integration with Existing Systems

Most AI pilots fail to reach production in BFSI because they are built in isolation from the core systems they need to interact with. Forbes highlights that AI cannot function effectively if it's simply added on top of existing infrastructure. Without integration into ERP, CRM, supply chain, and finance systems, AI initiatives become points of failure. I've seen this happen when financial institutions try to bolt on a new AI-powered fraud detection system without properly connecting it to their core banking platform. This can lead to delayed data access, inaccurate insights, and ultimately, a failed deployment. The key is to ensure that AI is embedded within the existing workflows and processes, not treated as a standalone project. This requires a comprehensive approach to technology modernization and a deep understanding of the underlying systems.

Unclear Business Goals and Objectives

Another common reason pilots stall is the absence of clear, measurable business goals. AI initiatives often start as low-risk experiments, with the primary objective of testing feasibility rather than delivering tangible outcomes.

UnifyApps notes that AI pilots often succeed because they are insulated from reality, operating on narrow scopes, curated data, and manual guardrails. However, production environments remove these buffers, exposing the AI system to fragmented data and brittle integrations.

Poor Data Quality and Accessibility

Data quality is a critical factor that determines whether AI pilots fail to reach production in BFSI. TFiR emphasizes the importance of having AI-ready data, stating that 'Garbage in, garbage out.' I have seen many AI projects stall because the data used to train and test the models is incomplete, inaccurate, or inconsistent. Imagine deploying an AI-powered credit scoring system that relies on outdated customer data. It's likely to produce biased or unreliable results, leading to poor decision-making and regulatory compliance issues. Furthermore, data silos and limited access to relevant data can also hinder AI adoption. Before launching an AI pilot, it's essential to assess the quality and accessibility of your data and implement robust data governance practices. This includes data cleansing, data validation, and data integration to ensure that the AI models have access to the right data at the right time.

I have seen teams treat data quality as a one-time fix during the pilot phase. This is a mistake. Data quality is an ongoing process that requires continuous monitoring and improvement.
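To make "continuous monitoring" concrete, here is a minimal sketch of a recurring data quality check. It is an illustration under stated assumptions, not a prescribed implementation: the `assess_quality` function, the `last_updated` field, the one-year freshness window, and the sample records are all hypothetical.

```python
from dataclasses import dataclass
from datetime import date


@dataclass
class QualityReport:
    completeness: float  # share of records with every required field present
    freshness: float     # share of records updated within the allowed window


def assess_quality(records, required_fields, as_of, max_age_days=365):
    """Compute simple completeness and freshness ratios for a batch of records."""
    if not records:
        return QualityReport(completeness=0.0, freshness=0.0)

    # A record is complete when no required field is missing or empty.
    complete = sum(
        all(rec.get(field) not in (None, "") for field in required_fields)
        for rec in records
    )
    # A record is fresh when it was updated within max_age_days of as_of.
    fresh = sum(
        rec.get("last_updated") is not None
        and (as_of - rec["last_updated"]).days <= max_age_days
        for rec in records
    )
    total = len(records)
    return QualityReport(complete / total, fresh / total)


# Hypothetical customer records, for illustration only.
customers = [
    {"id": 1, "income": 52000, "last_updated": date(2024, 11, 1)},
    {"id": 2, "income": None, "last_updated": date(2024, 10, 15)},
    {"id": 3, "income": 61000, "last_updated": date(2021, 3, 9)},
    {"id": 4, "income": 48000, "last_updated": date(2024, 12, 20)},
]

report = assess_quality(customers, ["id", "income"], as_of=date(2025, 1, 1))
print(report)
```

Running a check like this on a schedule, and alerting when either ratio drops below an agreed level, is one way to treat data quality as a process rather than a one-time cleanup.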

Insufficient Operationalization and Ownership

Many AI pilots fail to reach production in BFSI because the foundations, controls, and ownership were never defined, states Coherent Market Insights. In my experience, a lack of clear ownership and accountability after the pilot phase can lead to integration challenges, security vulnerabilities, and a general lack of trust in the AI system. I've seen situations where the data science team builds a promising AI model, but there's no clear plan for how the IT department will deploy and maintain it in production. This disconnect can result in delayed deployments, increased costs, and ultimately, a failed project. To avoid this pitfall, it's crucial to involve all relevant stakeholders from the beginning of the AI project and establish clear roles and responsibilities for deployment, maintenance, and governance. This ensures that the AI system is not only technically sound but also aligned with the organization's operational and security requirements. The way we approach this in our Control engagements is to ensure that the proper infrastructure and support are in place before deployment.

| Factor | Description | Impact | Mitigation Strategy |
| --- | --- | --- | --- |
| Lack of Integration | AI pilots operate in isolation from core systems | Delayed data access, inaccurate insights, failed deployment | Embed AI within existing workflows, modernize technology |
| Unclear Business Goals | AI initiatives lack clear objectives and KPIs | Scope creep, wasted resources, failure to demonstrate value | Align AI with specific business objectives, define metrics |
| Poor Data Quality | Incomplete, inaccurate, or inconsistent data | Biased results, poor decision-making, compliance issues | Implement data governance practices, cleanse and validate data |
| Insufficient Operationalization | Lack of ownership and accountability after the pilot phase | Delayed deployments, increased costs, security vulnerabilities | Involve stakeholders, establish roles and responsibilities |

Focusing on Models Over Infrastructure

Many organizations obsess over the AI models themselves, while neglecting the underlying infrastructure required to support them. According to the 2025 AI Agent Report, the gap between a working demo and a reliable production system is where projects often die. I've seen teams spend months fine-tuning a machine learning algorithm, only to discover that their existing systems cannot handle the volume of data required to run it in real-time. This disconnect can lead to performance bottlenecks, scalability issues, and ultimately, a failed deployment. To avoid this trap, it's essential to invest in a robust and scalable infrastructure that can support the demands of AI applications. This includes modernizing legacy systems, establishing data pipelines, and implementing cloud-native technologies. As I've seen across many engagements, the foundations you build are just as important as the AI itself.

One critical area where AI pilot projects often falter in BFSI is in addressing the inherent complexities of regulatory compliance and data governance. The financial services industry operates under intense scrutiny from regulatory bodies like the SEC, FINRA, and others, each with specific requirements around data privacy, model explainability, and bias mitigation. Many AI pilots, while demonstrating promising results in controlled environments, fail to adequately address these compliance considerations. For example, a credit risk model that performs well on historical data might inadvertently discriminate against certain demographic groups, leading to regulatory penalties and reputational damage. Similarly, a fraud detection system that relies on opaque AI algorithms might be difficult to explain and justify to regulators, hindering its deployment. To overcome these challenges, BFSI firms need to embed compliance experts and legal counsel into the AI development process from the outset. This ensures that regulatory requirements are considered at every stage, from data selection and model design to deployment and monitoring. Furthermore, investing in explainable AI (XAI) techniques and robust model validation processes is crucial for demonstrating compliance and building trust with regulators.
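As one illustration of the bias checks described above, the sketch below compares approval rates across groups and flags large gaps for human review. It is a simplified screening heuristic, not a compliance test: the group labels, the sample decisions, and the 0.8 review threshold are assumptions chosen for the example, and a real validation process would be defined with compliance and legal teams.

```python
from collections import defaultdict


def approval_rates(decisions):
    """Return the approval rate per group from (group, approved) pairs."""
    approved = defaultdict(int)
    total = defaultdict(int)
    for group, was_approved in decisions:
        total[group] += 1
        approved[group] += int(was_approved)
    return {group: approved[group] / total[group] for group in total}


def disparate_impact_ratio(decisions):
    """Lowest group approval rate divided by the highest (1.0 means parity)."""
    rates = approval_rates(decisions)
    if not rates:
        raise ValueError("no decisions supplied")
    highest = max(rates.values())
    if highest == 0:
        return 1.0  # nobody was approved in any group, so there is no gap
    return min(rates.values()) / highest


# Hypothetical model decisions: (group label, approved?)
decisions = (
    [("A", True)] * 80 + [("A", False)] * 20
    + [("B", True)] * 60 + [("B", False)] * 40
)

ratio = disparate_impact_ratio(decisions)
REVIEW_THRESHOLD = 0.8  # assumed screening threshold, not a regulatory rule
needs_review = ratio < REVIEW_THRESHOLD
print(f"ratio={ratio:.2f}, needs_review={needs_review}")
```

A low ratio does not prove discrimination, and a high one does not rule it out; the point is to surface candidates for deeper review before a model reaches production.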

Another significant hurdle in transitioning AI pilots to production is the lack of a clear, well-defined deployment strategy. Many organizations treat AI pilot projects as isolated experiments, without a concrete plan for integrating them into existing IT infrastructure and business processes. This often results in compatibility issues, scalability challenges, and difficulties in maintaining and updating the AI system over time. A successful deployment strategy should encompass several key elements, including a detailed plan for data integration, infrastructure provisioning, and application integration. It should also address the necessary security measures to protect sensitive financial data and prevent unauthorized access. Furthermore, the strategy should outline a robust monitoring and maintenance plan, including procedures for detecting and addressing model drift, retraining the AI system with new data, and managing version control. Finally, it's important to establish clear roles and responsibilities for the various stakeholders involved in the deployment process, including data scientists, IT professionals, business users, and compliance officers. This ensures that everyone is aligned on the goals and objectives of the deployment and that potential issues are addressed promptly and effectively.
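The drift monitoring mentioned above can be sketched with the Population Stability Index (PSI), a metric often used in credit risk to compare a recent score distribution against a baseline. This is a minimal sketch under assumptions: the equal-width binning, the `1e-6` floor for empty bins, the synthetic scores, and the 0.25 alert level (a commonly cited rule of thumb, not a standard) are all illustrative choices.

```python
import math


def psi(expected, actual, bins=10):
    """Population Stability Index between a baseline and a recent sample."""
    lo, hi = min(expected), max(expected)
    width = (hi - lo) / bins or 1.0  # fall back to 1.0 if the baseline is constant

    def proportions(values):
        counts = [0] * bins
        for v in values:
            idx = int((v - lo) / width)
            counts[min(max(idx, 0), bins - 1)] += 1  # clamp out-of-range values
        # A small floor avoids log(0) when a bin is empty.
        return [max(c / len(values), 1e-6) for c in counts]

    e, a = proportions(expected), proportions(actual)
    return sum((ai - ei) * math.log(ai / ei) for ei, ai in zip(e, a))


# Synthetic model scores, for illustration only.
baseline = [i / 100 for i in range(100)]
recent_stable = list(baseline)
recent_shifted = [score + 0.3 for score in baseline]

ALERT_LEVEL = 0.25  # assumed rule-of-thumb threshold for significant drift
stable_psi = psi(baseline, recent_stable)
shifted_psi = psi(baseline, recent_shifted)
print(f"stable: {stable_psi:.3f}, shifted: {shifted_psi:.3f}")
print("retraining review needed:", shifted_psi > ALERT_LEVEL)
```

In a deployment plan, a check like this would typically run on a schedule, with a named owner responsible for deciding whether an alert leads to retraining.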

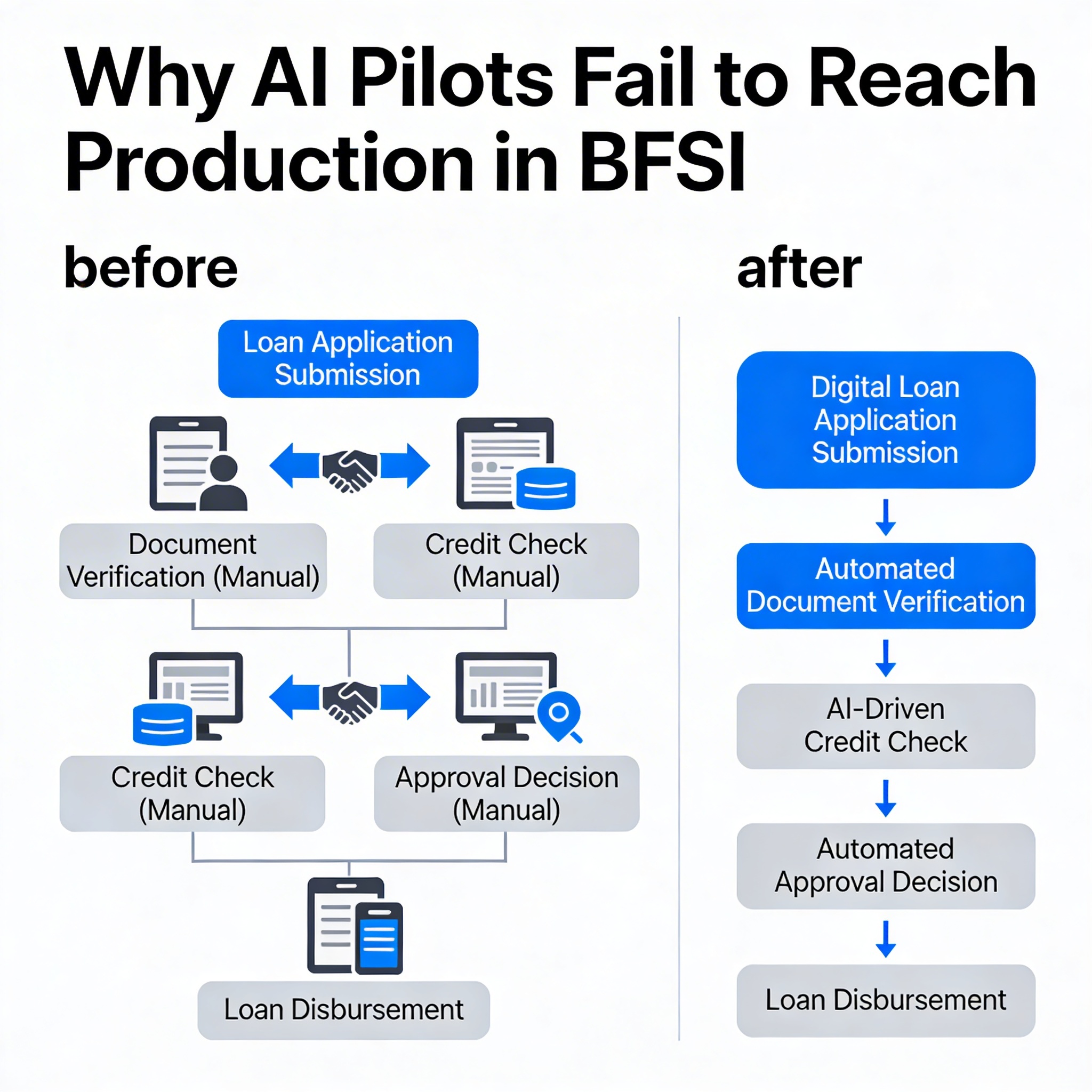

A BFSI Example: Automating Loan Applications

I recently worked with a mid-sized regional bank that was struggling to automate its loan application process. The bank's leadership wanted to use AI to streamline the process, reduce operational costs, and improve customer satisfaction. However, their initial AI pilot failed to reach production due to a number of challenges. First, the AI model was trained on a limited dataset that didn't accurately reflect the diversity of the bank's customer base. This resulted in biased loan decisions and compliance issues. Second, the AI system was not integrated with the bank's core banking platform, leading to delays in data access and manual data entry. Third, there was no clear plan for how the AI system would be monitored and maintained in production. The bank lacked the internal expertise to manage the complex AI infrastructure and ensure its ongoing performance. To address these challenges, we worked with the bank to redefine their AI strategy and take a more holistic approach. We helped them build a more representative dataset, integrate the AI system with their core banking platform, and establish a dedicated AI operations team. As a result, the bank was able to automate 70% of its loan applications, reduce operational costs by 40%, and improve customer satisfaction scores by 25%.

The key takeaway is that successful AI deployment in BFSI requires a holistic approach that addresses not only the technical aspects but also the organizational, data, and operational considerations. If you're in a situation where your AI pilots are consistently failing to reach production, the next move is to take a step back and assess your overall AI readiness.

The BFSI firms that move fastest are not necessarily those with the largest AI budgets. They take a measured and methodical approach to these initiatives. If you are looking to take your AI pilots into production, the right approach is worth understanding in detail. The clearest illustration of how this works in practice is how we run the Control engagement at Wednesday.

FAQs

What metrics should I track to know if my BFSI AI pilot is likely to fail before full deployment?

Track data quality metrics (accuracy, completeness), integration success rates with existing systems, alignment of AI outputs with defined business KPIs, and user adoption rates among relevant teams. Also, monitor ongoing operational costs versus projected savings. Regularly assessing these indicators can reveal potential roadblocks early, preventing wasted resources and ensuring a higher chance of successful production.

How can organizational silos in BFSI contribute to AI pilot failures?

Silos prevent data sharing and cross-functional collaboration, leading to AI systems that don't integrate well or address real-world business needs. For example, if the IT department doesn't communicate with the risk management team, an AI-powered fraud detection system might not align with compliance requirements. Breaking down these silos is crucial for successful AI implementations.

What's a reasonable timeline for a BFSI AI pilot to demonstrate production viability?

A reasonable timeline for demonstrating production viability in BFSI is typically 6-12 months. This allows sufficient time to gather enough data, train and test the AI models, integrate them with existing systems, and measure their impact on key business metrics. Regular checkpoints and clear milestones should be established to track progress and identify potential issues early on.