I have watched founders lose sleep over the same question: how much should we actually spend on our MVP? It is not a trivial concern. The number you land on determines how many shots you get at product-market fit before your runway runs out. According to Helpware's 2026 analysis, the cost to build an MVP ranges from $10,000 to $200,000, depending on complexity and team factors. That range is so wide it is almost useless without context. Two founders can spend the same $50,000 and get completely different outcomes. One validates a business model. The other ships features nobody asked for. The difference is not the budget. It is whether the MVP cost was aligned with what the business actually needed to learn at that stage.

MVP cost is the total investment required to build a minimum viable product that validates a core business hypothesis with real users. It encompasses design, development, infrastructure, and validation work, and typically ranges from $10,000 to over $200,000 depending on complexity, team composition, and the number of assumptions being tested. The strategic question is not how much it costs, but whether the investment validates the right hypothesis.

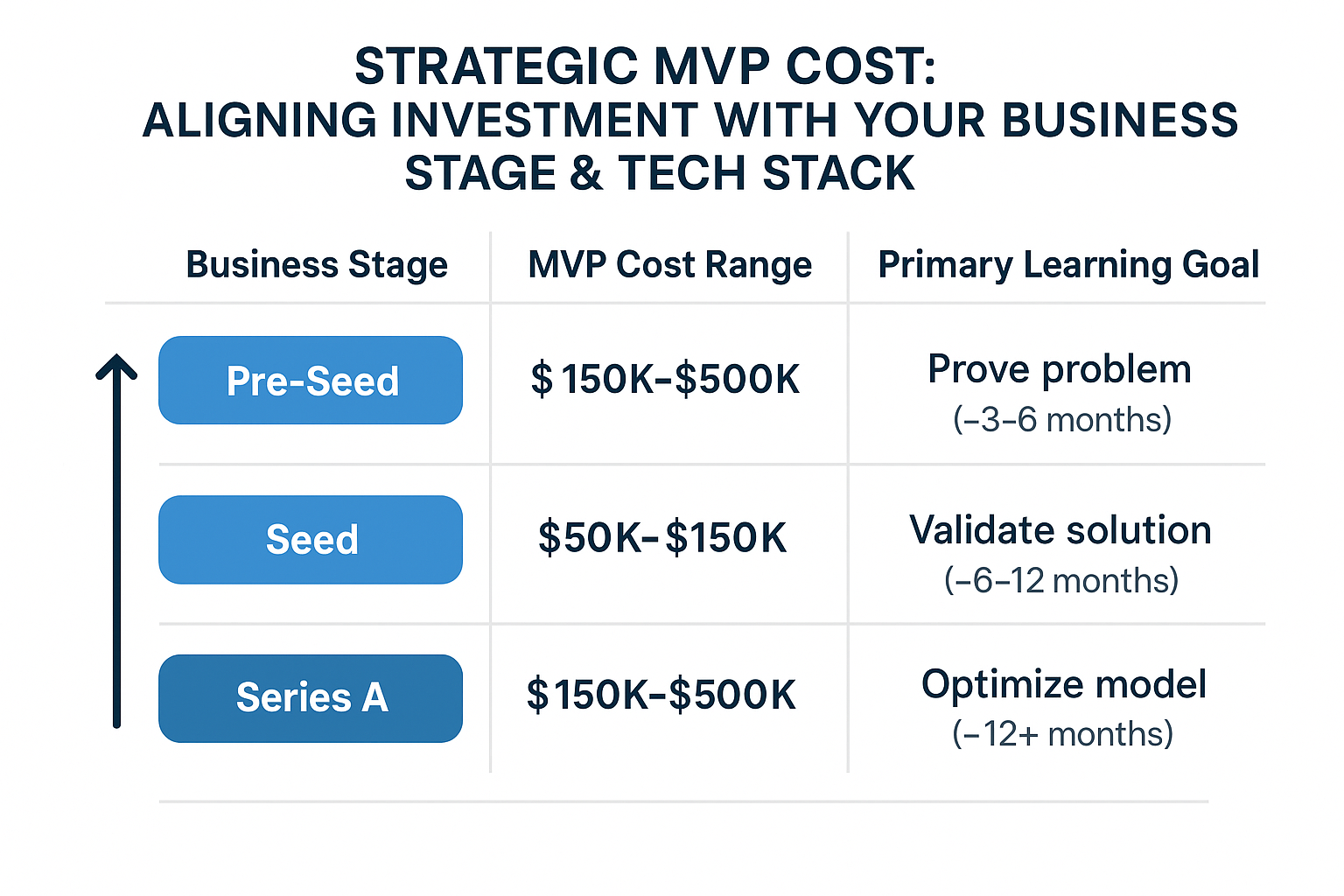

TL;DR: MVP cost varies dramatically by business stage, not just by feature count. Pre-seed teams should spend $10K-$30K validating a single core assumption, while Series A companies may invest $100K-$200K to prove product-market fit with production-grade infrastructure. The most common mistake is matching budget to ambition instead of matching budget to the specific learning goal at each stage. Teams that align spending with validation objectives reduce wasted development by 40-60% compared to feature-driven budgeting.

Why your business stage determines your MVP budget

I have worked with founders at every stage, from pre-idea to Series A, and the single biggest cost mistake I see is treating MVP cost as a feature problem. Founders list the features they want, estimate hours, multiply by a rate, and arrive at a number. That number is almost always wrong, because it ignores the most important variable: what stage the business is in and what it needs to learn next.

A pre-seed startup needs to validate one core assumption as cheaply as possible. A seed-stage company needs to prove that users will return without being begged. A Series A company needs to demonstrate product-market fit with clear unit economics. These are fundamentally different goals, and they require fundamentally different MVP investments.

Across dozens of engagements, I have seen the pattern repeat: teams that match their MVP cost to their business stage ship faster and waste less money. Teams that try to build a Series A product on a pre-seed budget, or the reverse, burn through capital without getting the learning they need.

The right approach is to define your learning goal first, then work backward to the MVP scope and cost. If you are pre-seed, your learning goal is singular: does anyone want this? The MVP that answers that question should cost a fraction of what a full product would cost, because you are not building a product. You are testing a hypothesis.

What actually drives MVP cost (it's not what you think)

Most teams estimate MVP cost by listing features and assigning hourly rates. I have done this dozens of times, and it consistently produces wrong answers. The real cost drivers are structural: how many business assumptions need validation, whether you have existing users or are starting from zero, what third-party integrations are required, and whether you need to handle regulatory or compliance data.

A simple dashboard with user authentication might cost $15,000. The same dashboard integrated with three external APIs, real-time data sync, and role-based access control might cost $60,000. The feature list looks similar. The underlying complexity does not.

This is where most teams get it wrong. They compare MVP cost across projects based on surface-level features. A marketplace MVP that validates both the supply and demand sides carries a different cost than a SaaS tool that validates a single workflow, because the number of assumptions determines the validation work, which in turn determines the design, development, and testing investment.

When a founder shows me a feature list and asks for a cost estimate, the first question I ask is: what assumptions are you trying to validate? That answer changes everything about what the MVP should include and what it should cost.

How your tech stack compounds MVP cost over time

I learned this the hard way on a project where we chose a trendy serverless architecture for an MVP that needed frequent iteration. The initial build was fast and cheap. But every change required redeploying functions, debugging cold starts, and managing distributed state. What should have been a two-week iteration cycle turned into four weeks. The tech stack that saved money on day one was costing us two extra weeks on every iteration by month three.

The tech stack decision has cost implications that compound. Choosing between a monolith and microservices, between SQL and NoSQL, between native and cross-platform mobile, or between self-hosted and managed infrastructure affects not just the initial MVP cost but the cost of every subsequent iteration.

For early-stage MVPs, I recommend boring technology. A proven framework with a large talent pool, a database your team has used before, and infrastructure that does not require a dedicated DevOps engineer. The goal is learning velocity, not architectural elegance. Save the sophisticated stack decisions for when you know what you are building and why.

When we approach this in our Launch engagements, we design architecture for learning, not for scale. The technical decisions are made to maximize iteration speed and minimize the cost of changing direction, because at the MVP stage, changing direction is the most likely outcome.

The most important metric for MVP cost is not the total budget. It is learning efficiency per dollar spent. A $20,000 MVP that definitively kills a bad idea is more valuable than a $200,000 MVP that leaves the team uncertain about product-market fit.

A practical framework for aligning MVP cost with your stage

I use a three-step framework with every founder who asks about MVP cost. It is not complicated, but it requires discipline, because it means saying no to features that feel important but do not serve the learning goal.

Step one: define the single most important assumption your business depends on. Not three assumptions. Not five. One. For a marketplace, it might be whether supply will show up before demand. For a SaaS tool, it might be whether users will pay for automated reporting. This assumption determines the MVP scope.

Step two: scope the smallest possible product that tests that assumption. This is where discipline matters. Every feature that does not directly test the core assumption is a distraction. I have seen founders add user profiles, settings pages, and notification systems to MVPs that were supposed to test a single workflow. Each addition pushes the cost up and slows the learning down.

Step three: set a budget ceiling based on your stage and runway, not on the feature list. If you have 18 months of runway, spending 30 percent of it on a single MVP iteration is reckless. If you have six months, spending 15 percent of it on a fast validation sprint might be the smartest move you make.
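The budget ceiling in step three comes down to simple arithmetic. The Python sketch below is only an illustration: the function names, the $600,000 cash figure, and the choice to express the ceiling as a whole-number percentage of cash on hand are assumptions made for the example, not a standard formula.

```python
def budget_ceiling(cash_on_hand: int, max_percent: int) -> float:
    """Largest MVP spend allowed when capped at max_percent of available cash."""
    if cash_on_hand <= 0:
        raise ValueError("cash_on_hand must be positive")
    if not 0 < max_percent <= 100:
        raise ValueError("max_percent must be between 1 and 100")
    return cash_on_hand * max_percent / 100


def percent_of_cash(mvp_cost: int, cash_on_hand: int) -> float:
    """Share of available cash, in percent, that one MVP iteration would consume."""
    if cash_on_hand <= 0:
        raise ValueError("cash_on_hand must be positive")
    return mvp_cost * 100 / cash_on_hand


if __name__ == "__main__":
    cash = 600_000  # hypothetical cash on hand, chosen only for illustration

    # Ceiling for a fast validation sprint capped at 15 percent of cash.
    print(f"Ceiling at 15%: ${budget_ceiling(cash, 15):,.0f}")

    # Share consumed by a hypothetical $180,000 build.
    print(f"Share of cash: {percent_of_cash(180_000, cash):.0f}%")
```

Setting the ceiling before looking at the feature list keeps the scope conversation honest: the features have to fit the number, not the other way around.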

The founders who move fastest are the ones who stop guessing and start validating. If you are building with raised capital and still don't know which bets to double down on, the sprint model is worth understanding in detail. The clearest illustration of how this works in practice is how we run the Launch engagement at Wednesday, where every sprint is designed to test a specific business outcome, not just ship code.

The sprint model operationalizes this validation-first mindset by breaking down the product journey into discrete, time-boxed experiments. A typical validation sprint isn't about building a full feature; it's about constructing the smallest possible artifact—be it a clickable prototype, a concierge MVP, or a targeted landing page—that can test a core business assumption. For instance, instead of spending $50,000 to build a full recommendation engine, a two-week sprint might spend $5,000 to manually curate recommendations for 100 users via email, measuring engagement and conversion lift. This approach directly tackles the highest-risk assumption (will users act on recommendations?) before a single line of algorithm code is written. The capital saved isn't just the $45,000 in immediate development costs; it's the months of runway preserved and the clarity gained to either pivot the strategy or double down with confidence.

Implementing this requires a rigorous, almost scientific, framework for each sprint. It begins with a clearly articulated hypothesis: "We believe that [user segment] will [take this action] because [this underlying need], and we will know we're right if we see [this measurable metric] move by [X%] within [this timeframe]." The subsequent build is then ruthlessly scoped to only what is necessary to generate reliable data for that metric. This often means embracing "Wizard of Oz" or "manual backend" techniques that feel unscalable but are brilliant for learning. For example, a fintech startup testing a new budgeting insight might have an analyst manually generate the insights for the first 50 users instead of building an AI pipeline. The cost is a few hours of analyst time versus months of data science work. The key is that the user experience feels real, providing valid behavioral data, while the backend is intentionally lean. This disciplined scoping is what separates a strategic validation sprint from a poorly planned mini-project that still bleeds resources.
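One way to keep that hypothesis template honest is to write it down as data before the sprint starts. The sketch below is a hypothetical structure, not a tool we ship: the class name `SprintHypothesis`, its field names, and the rule for judging the result (relative change against a baseline) are all assumptions made for illustration.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class SprintHypothesis:
    """One validation sprint's hypothesis, mirroring the template above."""

    user_segment: str
    action: str
    underlying_need: str
    metric: str
    target_change_pct: float
    timeframe: str

    def statement(self) -> str:
        """Render the hypothesis in the sentence form used in the text."""
        return (
            f"We believe that {self.user_segment} will {self.action} "
            f"because {self.underlying_need}, and we will know we're right "
            f"if we see {self.metric} move by {self.target_change_pct:g}% "
            f"within {self.timeframe}."
        )

    def is_supported(self, baseline: float, observed: float) -> bool:
        """True when the relative change from baseline meets the target."""
        if baseline <= 0:
            raise ValueError("baseline must be positive")
        change_pct = (observed - baseline) * 100 / baseline
        return change_pct >= self.target_change_pct


if __name__ == "__main__":
    # Hypothetical values for the manually generated budgeting insights example.
    hypothesis = SprintHypothesis(
        user_segment="the first 50 users",
        action="act on a weekly budgeting insight",
        underlying_need="they cannot see where their money goes",
        metric="weekly active users",
        target_change_pct=20,
        timeframe="two weeks",
    )
    print(hypothesis.statement())
    print(hypothesis.is_supported(baseline=40, observed=52))
```

Freezing the dataclass is a deliberate choice: the target should not quietly move once the data starts coming in.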

Ultimately, this methodology reframes the entire conversation around MVP cost. The question shifts from "How much will it cost to build my vision?" to "What is the most capital-efficient way to de-risk my next critical assumption?" The budget becomes a tool for purchasing information, not just a list of features. A well-run sprint portfolio might allocate 70% of the initial budget to these rapid validation cycles, with the remaining 30% reserved for building the first scalable iteration of the product once the core value proposition and growth levers are empirically proven. When significant capital is finally deployed for full-scale development, it goes into a product concept that has already demonstrated market pull. Every dollar then builds on validated learning, not hopeful speculation.
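The 70/30 split is easy to work through with numbers. In the sketch below, the function names and the $50,000 total budget are hypothetical, and the $5,000 sprint cost is borrowed from the manual recommendations example earlier in this section; treat it as a rough planning aid, not a rule.

```python
def split_budget(total_budget: int, validation_percent: int = 70) -> tuple[int, int]:
    """Split a budget into (validation sprints, reserve for the first scalable build)."""
    if not 0 <= validation_percent <= 100:
        raise ValueError("validation_percent must be between 0 and 100")
    validation = total_budget * validation_percent // 100
    build_reserve = total_budget - validation
    return validation, build_reserve


def sprints_affordable(validation_budget: int, cost_per_sprint: int) -> int:
    """Number of whole validation sprints the validation budget covers."""
    if cost_per_sprint <= 0:
        raise ValueError("cost_per_sprint must be positive")
    return validation_budget // cost_per_sprint


if __name__ == "__main__":
    total = 50_000  # hypothetical initial budget
    validation, reserve = split_budget(total)
    print(f"Validation: ${validation:,}  Reserve: ${reserve:,}")
    print(f"Sprints at $5,000 each: {sprints_affordable(validation, 5_000)}")
```

Counting sprints instead of dollars makes the trade-off concrete: each feature added to a sprint is paid for in experiments you no longer get to run.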

How a pre-seed HR tech startup validated their idea for $18K instead of $120K

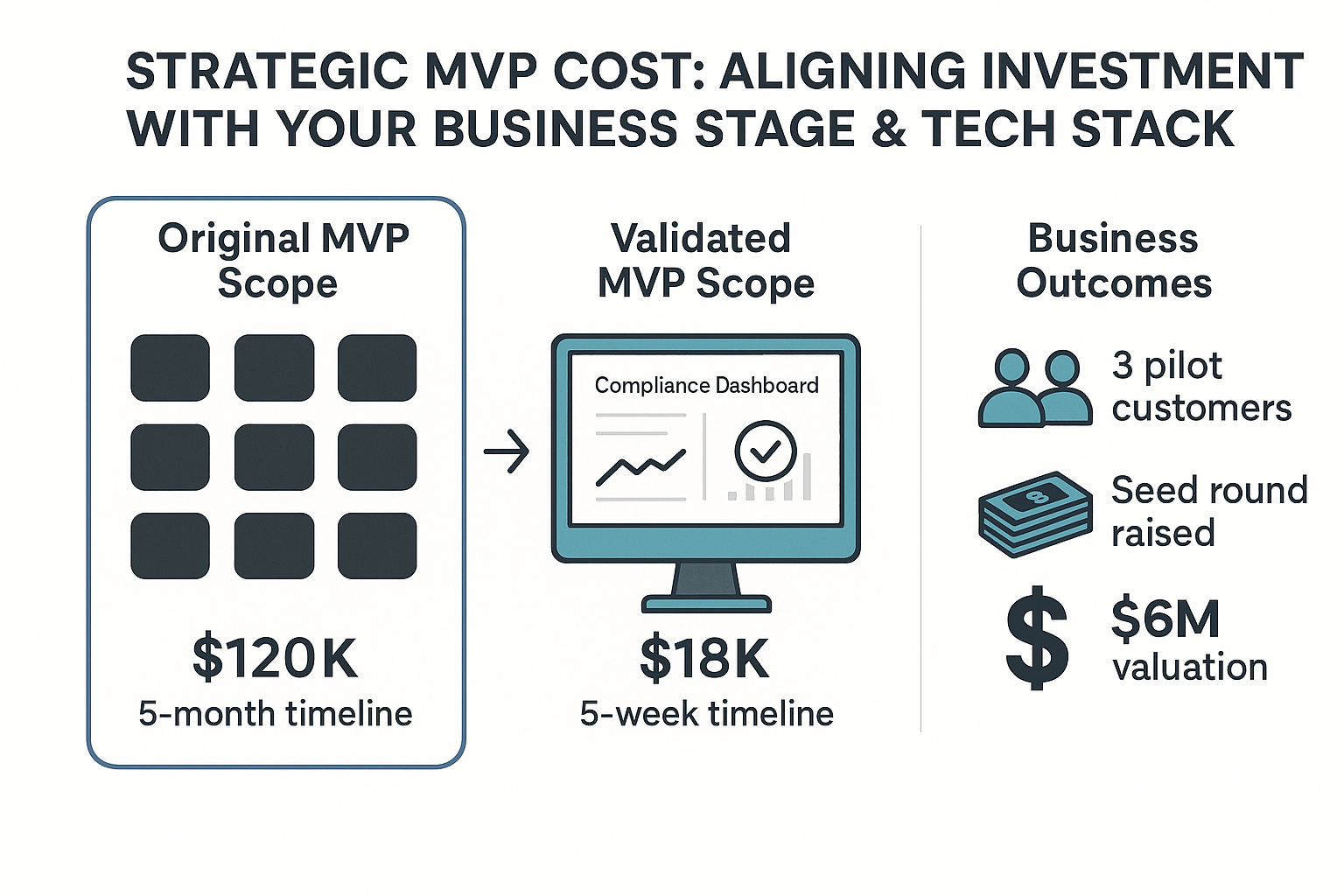

A founder came to us with a pre-seed HR tech startup and a $120,000 MVP budget. The original scope included eight features: employee onboarding, compliance tracking, document management, reporting dashboards, team directory, leave management, performance reviews, and an admin panel. The founder had received quotes from three agencies ranging from $100,000 to $150,000 and was ready to sign.

Before committing, we ran a structured thinking sprint to identify the core assumption. After interviewing twelve HR managers at mid-size companies, we found that the real pain point was not automated compliance tracking, as the founder assumed. It was the inability to see compliance status across all employees in one place. The existing tools forced managers to check five different systems.

This single insight changed the MVP entirely. Instead of building eight features, we built one: a unified compliance dashboard that pulled data from existing tools via API. The MVP cost was $18,000 and took five weeks. The founder validated that HR managers would pay for this solution within two weeks of launch, signing three pilot customers at $500 per month.

Based on the validation data, the founder redesigned the product roadmap, raised a seed round at a $6 million valuation, and built the full product with evidence-backed confidence. The MVP cost 85 percent less than the original $120,000 budget, and the learning was more valuable than a full build would have been.
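For readers who want to check the numbers, the arithmetic behind this case is short. The sketch below uses only the figures quoted above; the function name and the months-to-recoup figure are additions for illustration, and the latter assumes the three pilot customers keep paying at the same rate.

```python
def summarize_case(
    original_budget: int,
    mvp_cost: int,
    pilot_customers: int,
    price_per_month: int,
) -> dict[str, float]:
    """Derive savings and pilot revenue figures from the case study inputs."""
    savings = original_budget - mvp_cost
    monthly_pilot_revenue = pilot_customers * price_per_month
    return {
        "savings": savings,
        "savings_pct": savings * 100 / original_budget,
        "budget_to_cost_ratio": original_budget / mvp_cost,
        "monthly_pilot_revenue": monthly_pilot_revenue,
        # Illustrative only: assumes pilot revenue stays constant.
        "months_to_recoup": mvp_cost / monthly_pilot_revenue,
    }


if __name__ == "__main__":
    summary = summarize_case(
        original_budget=120_000,
        mvp_cost=18_000,
        pilot_customers=3,
        price_per_month=500,
    )
    for name, value in summary.items():
        print(f"{name}: {value:,.2f}")
```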

The most important decision you will make about MVP cost is not the number. It is the question behind the number: what are you trying to learn, and what is the cheapest way to learn it? If you are pre-seed, spend less than $30,000 and validate one assumption. If you are seed-stage, invest in a core workflow that proves users will return. If you are Series A, build the infrastructure that supports product-market fit at scale. The wrong move at any stage is the same: spending money to build features instead of spending money to reduce uncertainty.

FAQs

What is a realistic MVP cost for a funded startup?

For seed-stage startups, a realistic MVP investment is between $30,000 and $80,000, covering a core workflow that proves users will return to the product. Series A companies typically invest $80,000 to $200,000 to build production-grade infrastructure that supports product-market fit at scale. The right number depends less on feature count and more on how many business assumptions need validation and what level of reliability real users require.

What drives MVP cost up most unexpectedly?

Third-party API integrations, compliance and regulatory data handling, and real-time data synchronisation are the three factors most founders underestimate. A dashboard with user authentication might cost $15,000. The same dashboard integrated with three external APIs and role-based access control can cost $60,000. The feature surface looks similar — the underlying integration complexity does not.

Should you build an MVP or validate with a prototype first?

It depends on which assumption carries the most risk. If the core question is whether users want the product at all, a clickable prototype or concierge MVP (manually delivering the service) costs a fraction of a coded build and answers the question faster. If the core question is whether the technical approach is feasible, you need working code. Most early-stage founders should spend one to two weeks validating demand before committing budget to development.

How do you know when your MVP has cost too much?

When you have spent more than 30% of your runway without clear evidence of user demand or willingness to pay. A well-scoped MVP should produce a definitive answer — positive or negative — within 6 to 12 weeks. If the team is still debating what to build after that, the MVP scope was wrong, not the budget.

Last updated: March 17, 2026